Local governments sit on a mountain of public information: municipal codes, ordinances, permitting requirements, and service guides. Yet hardly anyone can find the information they want. Residents call city hall to ask questions that a public document already answers. Staff spend time answering the same questions over and over. The information exists; it just isn’t accessible.

Civic AI Agent is our answer. It’s a conversational assistant that lets residents ask questions and get accurate, cited answers drawn directly from official city documents, with no hallucinations, no guessing, and no dead-end search results.

We recently built a proof of concept for the City of Ruston. The architecture is designed to be replicated for any city, school district, county agency, or public organization.

What Is Civic AI Agent?

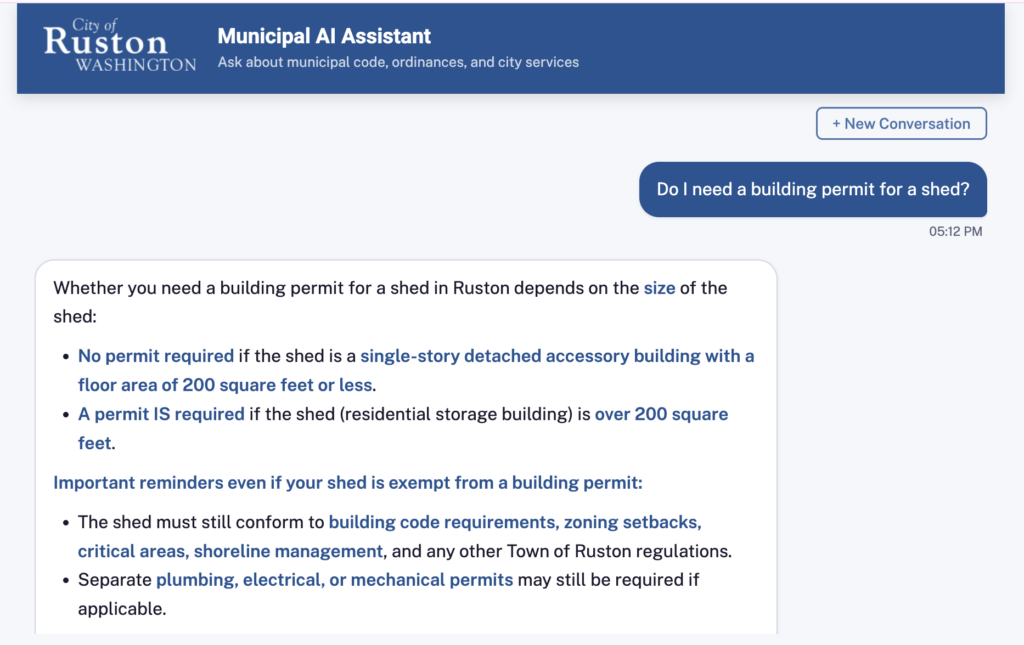

Civic AI Agent is a web-based chat interface built on AWS serverless architecture. Residents type a question like “What are the noise ordinance rules for construction?” or “How do I apply for a home occupation permit?” and receive a grounded answer with citations linking back to the exact section of the municipal code or ordinance that supports it.

The system is not a general-purpose chatbot. It only answers from documents you have explicitly indexed. That means every answer is traceable, auditable, and grounded in official city content.

Who Is It For?

- Cities and municipalities with municipal code, ordinances, city services, permitting

- School districts with policies, handbooks, enrollment requirements, board decisions

- County agencies with zoning regulations, health codes, public records

- Any organization with a large body of public-facing documents that residents or stakeholders need to navigate

Why an Agent, Not Just a Chatbot?

This is the most important architectural decision we made, and it shapes everything about where the product can go.

A chatbot answers questions. If you ask “How do I get a pet license?” it tells you the steps. That’s useful, but it’s still just a search engine with intelligent phrasing.

An AI agent can act. It has access to tools such as APIs, forms, databases, and external systems, and it can execute multi-step workflows on your behalf. The difference looks like this:

| Chatbot | Agent |
|---|---|
| “Here are the steps to apply for a pet license.” | “I’ve started your pet license application. Here’s what I need from you to complete it.” |
| “Your utility bill is due on the 15th.” | “I’ve scheduled your utility payment for the 14th. Here’s your confirmation.” |
| “The park permit form is at this link.” | “I’ve submitted your park reservation request for Saturday. You’ll receive a confirmation by email.” |

Civic AI Agent is built on Amazon Bedrock Agents, which provides the orchestration layer that makes this possible. In its current form, the agent answers questions grounded in city documents. But because it is a true agent, not a chatbot, it can be extended with action groups that connect to permitting systems, payment processors, scheduling platforms, or any REST API the city exposes.

The foundation is already in place. Adding new capabilities is a matter of connecting new tools, not rebuilding the system.
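To make the idea of an action group concrete, here is a minimal sketch of the function that could sit behind one. It is not part of the current deployment. The event and response shapes follow the Lambda contract for API-schema action groups as we understand it from the Bedrock Agents documentation, so verify the field names against the current docs. The `/pet-licenses` path, the `petName` parameter, and the `handleAction` name are all hypothetical.

```typescript
// Subset of the event Bedrock Agents passes to an action-group Lambda
// (API-schema style). Only the fields this sketch reads are declared.
interface ActionGroupEvent {
  messageVersion: string;
  actionGroup: string;
  apiPath: string;
  httpMethod: string;
  parameters?: { name: string; type: string; value: string }[];
}

interface ActionGroupResult {
  messageVersion: string;
  response: {
    actionGroup: string;
    apiPath: string;
    httpMethod: string;
    httpStatusCode: number;
    responseBody: { "application/json": { body: string } };
  };
}

// Hypothetical action: start a pet license application.
// A real version would call the city's licensing system here.
function handleAction(event: ActionGroupEvent): ActionGroupResult {
  // Flatten the name/value parameter list into a lookup table.
  const params: Record<string, string> = {};
  for (const p of event.parameters ?? []) params[p.name] = p.value;

  // Default: the agent asked for a route this function does not implement.
  let status = 404;
  let body: Record<string, unknown> = {
    error: `No action for ${event.httpMethod} ${event.apiPath}`,
  };

  if (event.httpMethod === "POST" && event.apiPath === "/pet-licenses") {
    const petName = params["petName"];
    if (!petName) {
      status = 400;
      body = { error: "petName is required" };
    } else {
      status = 200;
      body = { status: "STARTED", petName };
    }
  }

  // Echo the routing fields back so the agent can match the result to its call.
  return {
    messageVersion: "1.0",
    response: {
      actionGroup: event.actionGroup,
      apiPath: event.apiPath,
      httpMethod: event.httpMethod,
      httpStatusCode: status,
      responseBody: { "application/json": { body: JSON.stringify(body) } },
    },
  };
}
```

In a Lambda deployment this logic would be wrapped in an async exported handler. The agent decides when to call it based on the API schema attached to the action group, so the function itself stays a plain request/response mapping.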

In Practice: The City of Ruston

The City of Ruston proof of concept indexes the full municipal code and key pages from the city website. Residents ask questions and receive cited answers linked back to the source document. The City Clerk’s office manages the knowledge base, monitors resident interactions, and identifies documentation gaps through the admin portal — no engineering involvement required for day-to-day operations.

The proof of concept is the first deployment of the architecture. As it matures, we will share what we learn about resident usage patterns, the kinds of questions that surface documentation gaps, and how agent-based civic tools perform in a real municipal context.

Key Capabilities

Natural Language Q&A with Source Citations

Residents ask questions the way they would ask a person. The agent retrieves the most relevant document chunks, synthesizes an answer, and cites the source including a direct link to the original document.

Retrieval-Augmented Generation (RAG)

Civic AI Agent uses the RAG pattern, a widely used approach for grounding AI responses in an organization’s own documents. Rather than relying on a language model’s training data, the agent assembles every answer from documents you control. Answers are constrained to what is in your knowledge base, not what the model happens to remember. We use Bedrock Knowledge Bases, which can crawl websites or connect to specific data stores.
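The retrieve-then-ground flow can be sketched in a few lines. This is a toy stand-in, not how Bedrock Knowledge Bases works internally: it ranks chunks by simple keyword overlap where a managed knowledge base would use vector search, and every name here (`Chunk`, `retrieve`, `buildGroundedPrompt`) is an assumption made for illustration.

```typescript
interface Chunk {
  text: string;  // passage extracted from an indexed document
  title: string; // human-readable source name
  url: string;   // link back to the original document
}

interface GroundedPrompt {
  prompt: string | null; // null means nothing relevant was retrieved
  citations: { title: string; url: string }[];
}

const tokenize = (s: string): string[] => s.toLowerCase().match(/[a-z0-9]+/g) ?? [];

// Toy retriever: score each chunk by how many distinct question terms it contains.
function retrieve(question: string, chunks: Chunk[], k = 2): Chunk[] {
  const terms = new Set(tokenize(question).filter((t) => t.length > 3));
  return chunks
    .map((chunk) => ({
      chunk,
      score: new Set(tokenize(chunk.text).filter((t) => terms.has(t))).size,
    }))
    .filter((r) => r.score > 0)
    .sort((a, b) => b.score - a.score)
    .slice(0, k)
    .map((r) => r.chunk);
}

// Build the prompt the model would see, plus the citations shown to the resident.
function buildGroundedPrompt(question: string, chunks: Chunk[]): GroundedPrompt {
  const hits = retrieve(question, chunks);
  // Nothing to ground on: decline instead of letting the model guess.
  if (hits.length === 0) return { prompt: null, citations: [] };

  const sources = hits.map((c, i) => `[${i + 1}] ${c.title}: ${c.text}`).join("\n");
  const prompt =
    "Answer using only the numbered sources and cite them like [1]. " +
    "If the sources do not answer the question, say so.\n\n" +
    `Sources:\n${sources}\n\nQuestion: ${question}`;
  return { prompt, citations: hits.map(({ title, url }) => ({ title, url })) };
}
```

The design point is that citations come from the retrieval step, not from the model: the links shown to a resident are the sources that were actually placed in the prompt.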

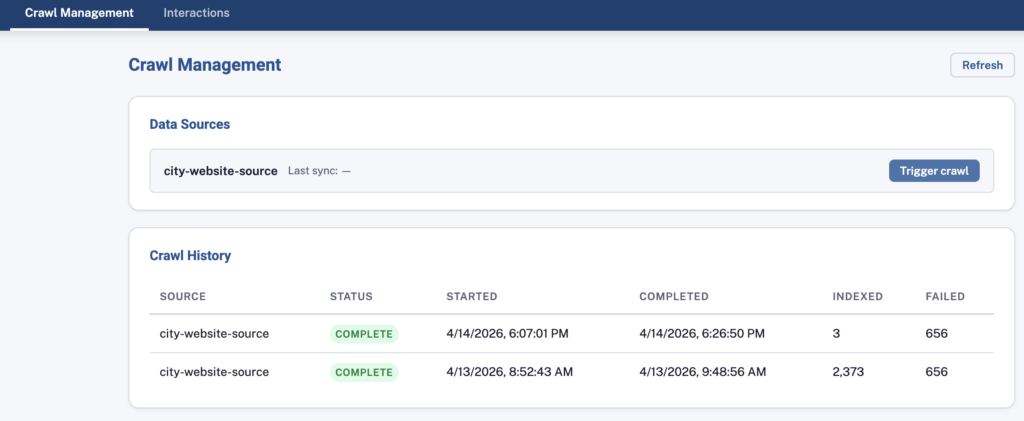

Admin Portal with Knowledge Base Management

The City Clerk’s staff manage the system through a secure admin portal. They can trigger crawls of city websites, monitor indexing jobs, and review every interaction residents have had with the assistant. No engineering involvement is required for day-to-day operations, and staff never need to use the AWS console to manage their data.

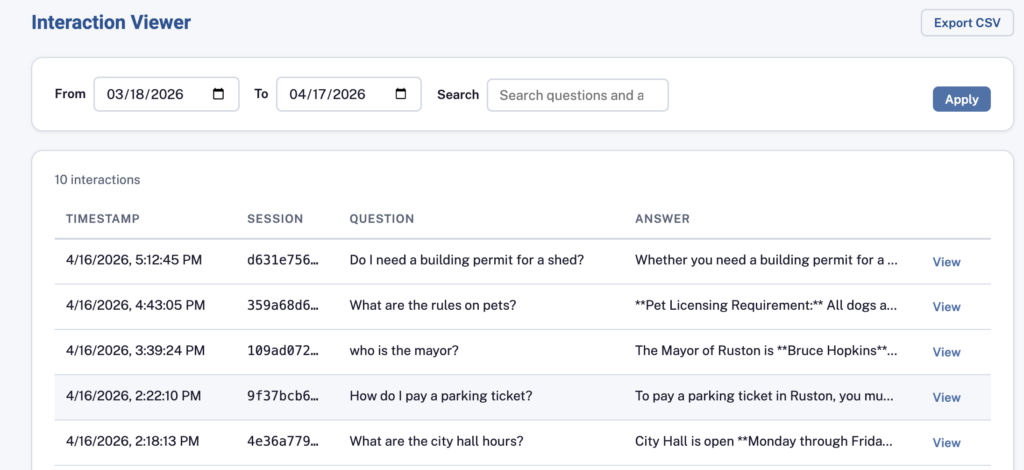

Interaction Analytics

Every question and answer is stored in a structured database. Administrators can browse and search all interactions to identify common resident questions, discover gaps in documentation, and improve city services.
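As an illustration of what that analysis might look like, the sketch below groups logged questions and flags the ones that were answered without any citation, a plausible signal of a documentation gap. The record shape and field names (`Interaction`, `citationCount`, `askedAt`) are assumptions; the actual table schema is not described in this post.

```typescript
// Hypothetical shape of one stored interaction.
interface Interaction {
  question: string;
  answer: string;
  citationCount: number; // how many sources the answer cited
  askedAt: string;       // ISO 8601 timestamp
}

// Collapse case, punctuation, and spacing so near-identical questions group together.
function normalize(question: string): string {
  return question
    .toLowerCase()
    .replace(/[^a-z0-9\s]/g, "")
    .replace(/\s+/g, " ")
    .trim();
}

// Most frequently asked questions, most common first.
function topQuestions(log: Interaction[], limit = 5): { question: string; count: number }[] {
  const counts = new Map<string, number>();
  for (const entry of log) {
    const key = normalize(entry.question);
    counts.set(key, (counts.get(key) ?? 0) + 1);
  }
  return Array.from(counts, ([question, count]) => ({ question, count }))
    .sort((a, b) => b.count - a.count)
    .slice(0, limit);
}

// Questions answered with no supporting source: candidates for new documentation.
function documentationGaps(log: Interaction[]): string[] {
  const gaps = new Set<string>();
  for (const entry of log) {
    if (entry.citationCount === 0) gaps.add(normalize(entry.question));
  }
  return Array.from(gaps);
}
```

Exact-match grouping after normalization is deliberately crude. A production version might cluster questions by meaning, but even this level of grouping is enough to surface the most repeated questions.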

All interactions pass through Amazon Bedrock Guardrails, which filter harmful content, block prompt injection attempts, and keep responses within the scope you’ve defined. That is non-negotiable for a public-facing agent representing the city.

AWS Serverless Architecture

Civic AI Agent is built entirely on AWS managed services. There are no servers to patch, no infrastructure to capacity-plan, and no operational overhead beyond the application itself. It scales automatically from zero to thousands of concurrent users.

![Architecture overview diagram: Amplify → API Gateway → Lambda → Bedrock Agent → Knowledge Base → DynamoDB](https://techreformers.com/wp-content/uploads/2026/04/image-5-1024x722.png)

Infrastructure as Code with AWS CDK

Every resource in the system (Lambda functions, API Gateway stages, DynamoDB tables, Cognito user pools, CloudWatch alarms) is defined in code using the AWS Cloud Development Kit (CDK). There is no manual console configuration. The entire infrastructure can be deployed from scratch with a single command.

CDK lets us write infrastructure in TypeScript, the same language the team already knows. Constructs are composable, type-safe, and version-controlled alongside the application code. When a new customer needs a deployment, the template is forked, a configuration file is updated, and `cdk deploy` stands up a complete, production-ready environment.

This approach means:

- Repeatability – every deployment is identical; no manual steps that can be forgotten or misconfigured

- Auditability – infrastructure changes go through code review, just like application code

- Teachability – new engineers can read the CDK stacks and understand exactly what is deployed and why

One Resource Per Layer — Environment Isolation Without Duplication

Rather than deploying entirely separate infrastructure stacks for dev and prod, each AWS service provides its own isolation mechanism:

- Bedrock Agents: One agent with two aliases — a draft alias routes to the in-development version (dev), a pinned alias routes to the stable promoted version (prod)

- Lambda: One function per handler with `dev` and `prod` aliases — the dev alias always tracks the latest version; the prod alias is explicitly promoted after testing

- API Gateway: One REST API with `dev` and `prod` stages — stage variables route each request to the matching Lambda alias at runtime, with no code duplication

- Amplify: One app with `dev` and `prod` branches — the branch determines the environment automatically

- DynamoDB / Cognito: Separate tables and user pools per environment — data and authentication isolation is required at these layers

One codebase. Two environments. No duplicated infrastructure stacks.

This pattern eliminates configuration drift between environments, reduces infrastructure cost, and mirrors exactly how AWS intends these services to be used.
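The API Gateway bullet above relies on a stage variable embedded in the Lambda integration URI. The sketch below builds such a URI and then mimics, locally, the substitution API Gateway performs per request. The variable name `lambdaAlias`, the function name in the usage note, and both helper functions are assumptions for illustration; the URI layout is the standard Lambda proxy integration format.

```typescript
type Stage = "dev" | "prod";

// Stage variables as they might be set on each API Gateway stage.
// The variable name `lambdaAlias` is an assumption, not taken from the deployment.
const stageVariables: Record<Stage, { lambdaAlias: string }> = {
  dev: { lambdaAlias: "dev" },
  prod: { lambdaAlias: "prod" },
};

// Literal placeholder that API Gateway replaces at request time.
const ALIAS_PLACEHOLDER = "${stageVariables.lambdaAlias}";

// One integration URI shared by every stage: the alias is left as a placeholder.
function integrationUriTemplate(region: string, accountId: string, functionName: string): string {
  const functionArn = `arn:aws:lambda:${region}:${accountId}:function:${functionName}`;
  return (
    `arn:aws:apigateway:${region}:lambda:path/2015-03-31/functions/` +
    `${functionArn}:${ALIAS_PLACEHOLDER}/invocations`
  );
}

// Local stand-in for the substitution API Gateway performs for a given stage.
function resolveForStage(template: string, stage: Stage): string {
  return template.split(ALIAS_PLACEHOLDER).join(stageVariables[stage].lambdaAlias);
}
```

With a placeholder account ID and a made-up function name, `resolveForStage(integrationUriTemplate("us-east-1", "123456789012", "chat-handler"), "prod")` yields a URI that targets `function:chat-handler:prod`, while the same template on the `dev` stage targets the `dev` alias. Note that each alias also needs its own resource-based permission allowing API Gateway to invoke it.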

Infrastructure changes (CDK stacks) follow the same review-and-promote pattern through AWS CodePipeline, with an audit trail, approval gates, and an automated path from a development commit to production.

Observability Built In

Every deployment includes CloudWatch dashboards and alarms out of the box:

- API Gateway request volume, error rates, and p99 latency

- Lambda duration, error counts, and throttles

- DynamoDB system health

- AWS X-Ray distributed tracing for end-to-end request visibility

Why These Choices Matter

Every architectural decision in Civic AI Agent is defensible and teachable. We chose Amazon Bedrock Agents over building our own orchestration layer because the agent primitive is where AWS is investing: guardrails and knowledge base integration are first-class features, not glue code we have to maintain. We chose CDK over console configuration because infrastructure that only exists as clicks in a console can’t be code-reviewed, can’t be diffed, and can’t be reproduced from scratch, and that means your team doesn’t really own it. We chose aliases and stages over duplicated stacks because that is how AWS designed these services to support multiple environments.

For a technical buyer, this matters in two ways. First, nothing here is exotic. Any engineer fluent in AWS serverless patterns can read the CDK, understand the Bedrock Agents configuration, and extend the system. Second, every pattern in this build is one we teach in our instructor-led classes. When we hand this system to your team, they are not inheriting a black box. They are inheriting a reference implementation of the same patterns they will see in training.

AWS Well-Architected Framework Alignment

Civic AI Agent is built to the AWS Well-Architected Framework across all six pillars:

| AWS Well-Architected Pillar | How We Address It |
|---|---|
| Operational Excellence | AWS CDK infrastructure as code, CI/CD via Amplify and CodePipeline, CloudWatch dashboards and alarms |
| Security | IAM least-privilege roles, Cognito MFA, Bedrock Guardrails, no hardcoded credentials |
| Reliability | Serverless and multi-AZ managed services |
| Performance Efficiency | Serverless auto-scaling and graceful error handling |
| Cost Optimization | Pay-per-request on Lambda, DynamoDB, and AI model invocation, no idle compute, shared resources across environments |
| Sustainability | Serverless eliminates over-provisioned capacity; shared Bedrock Agents reduces resource duplication |

Deploying for a New City

The architecture is designed to be replicated. Each new customer gets their own AWS account with a full, independent deployment, not a shared multi-tenant system. That means complete data isolation, independent scaling, and no blast radius between customers. Because the entire stack is defined in CDK, standing up a new city is a matter of forking the template, updating a configuration file, and running `cdk deploy`. A new base environment is live in under an hour. The real work is defining your data sources and how you want your agents to work.
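The per-customer configuration file is not shown in this post, so the following is only a guess at the kind of typed config and pre-deploy check such a template could use. Every field name in `CityConfig` and the `validateCityConfig` helper is hypothetical.

```typescript
// Hypothetical per-customer settings a forked template might read before deploying.
interface CityConfig {
  cityName: string;
  awsAccountId: string;    // the customer's dedicated AWS account
  region: string;          // e.g. "us-east-1"
  crawlSeedUrls: string[]; // sites the knowledge base should crawl
}

// Return a list of problems; an empty list means the config looks deployable.
function validateCityConfig(config: CityConfig): string[] {
  const errors: string[] = [];
  if (config.cityName.trim() === "") {
    errors.push("cityName must not be empty");
  }
  if (!/^\d{12}$/.test(config.awsAccountId)) {
    errors.push("awsAccountId must be a 12-digit AWS account ID");
  }
  if (!/^[a-z]{2}(-[a-z]+)+-\d$/.test(config.region)) {
    errors.push(`region "${config.region}" does not look like an AWS region`);
  }
  if (config.crawlSeedUrls.length === 0) {
    errors.push("crawlSeedUrls needs at least one URL");
  }
  for (const url of config.crawlSeedUrls) {
    if (!/^https:\/\//.test(url)) errors.push(`crawl URL must use https: ${url}`);
  }
  return errors;
}
```

Running a check like this before `cdk deploy` catches a mistyped account ID or an empty crawl list at the cheapest possible moment, before any resources are created in the new account.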

About Tech Reformers

Tech Reformers is an AWS Advanced Services Partner and AWS Authorized Training Partner (ATP). We design and build cloud-native solutions on AWS, and we train the developers who build and the DevOps engineers who maintain them.

Civic AI Agent is both a production product and a reference implementation: every architectural decision demonstrates the AWS patterns our instructors teach in the classroom. When we hand a project to a client’s engineering team, they receive working code and the training to own it.

If you’re a city, school district, or public agency interested in deploying Civic AI Agent, contact us. If you’re an engineering organization looking to upskill your team on AWS serverless and generative AI patterns, see our upcoming instructor-led training.